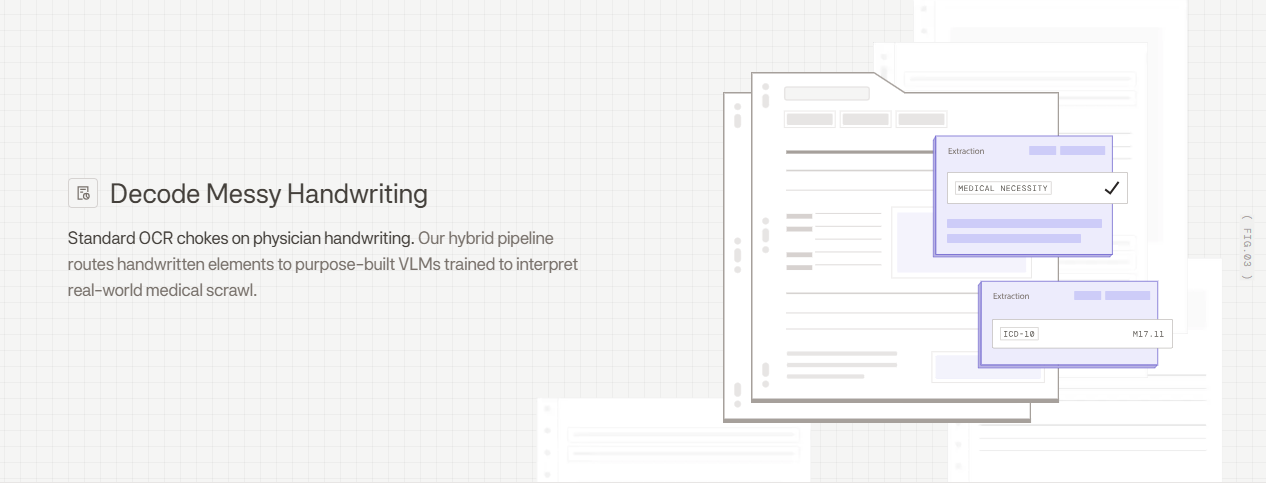

Your revenue cycle team knows exactly how much a single misread procedure code costs when claims get denied three weeks later. Standard OCR struggles with physician handwriting because it processes characters in isolation. It does not understand that "bid" is a dosing instruction, or that certain drug names only make sense in specific clinical contexts. The fix requires hybrid systems that do three things: detect document layouts, route handwritten sections through VLMs trained on medical terminology, and apply validation layers that catch dosing inconsistencies before bad data reaches your EHR.

TLDR:

- Modern VLMs achieve 85-95% accuracy on physician handwriting vs 65-78% for traditional OCR

- Hybrid pipelines route printed text through fast OCR and handwritten sections to VLMs for 95%+ accuracy

- Illegible handwriting is linked to an estimated 1.5 million injuries annually and accounts for up to 61% of medication errors in hospitals

- Validation layers apply clinical logic to catch dosing errors and drug name ambiguities before EHR entry

- Extend uses agentic OCR routing and VLMs trained on medical scrawl to parse prior auth forms and patient charts

Why Traditional OCR Fails on Physician Handwriting

Standard OCR relies on pattern matching against known letter and word shapes. This approach handles printed text effectively but fails on physician handwriting for several fundamental reasons.

Medical notes contain ligatures where letters connect unpredictably, inconsistent letter heights, and contextual variations where identical letters appear different across words. Traditional OCR engines can't resolve these ambiguities because they lack semantic understanding of medical terminology. A scribbled "mg" might be interpreted as "mcg," creating a 1,000x dosing error.

The consequences are severe. Misread handwriting has been linked to an estimated 1.5 million injuries annually. Research shows that illegible handwriting and transcription errors account for up to 61 percent of medication errors in hospitals.

Character-level OCR models also struggle with spatial layouts common in clinical forms, where handwritten notes appear in margins, at rotated angles, or overlapping printed text.

The Hidden Costs of Physician Handwriting in Healthcare Operations

Illegible physician handwriting creates cascading business problems beyond patient safety risks. Every misread prescription or clinical note triggers manual verification workflows that slow down care delivery and increase administrative burden.

Prior authorization forms require multiple rounds of communication when handwritten fields can't be read accurately. Staff spend hours contacting physicians to clarify dosages, diagnoses, or treatment plans, which delays approvals and creates friction in the revenue cycle as claims sit in pending status.

Patient chart reviews become bottlenecks during care transitions or specialist referrals. When intake staff can't decipher previous treatment notes, they either delay appointments for clarification or proceed with incomplete information, reducing throughput and creating compliance exposure.

Across the thousands of documents processed each week, the financial impact accumulates through longer processing times, larger administrative headcount, and delayed reimbursements.

Vision Language Models vs Traditional OCR for Medical Documents

Vision language models process entire document regions at once, analyzing spatial relationships between handwritten text, printed labels, form structures, and medical diagrams. This contextual awareness helps VLMs disambiguate illegible characters by understanding surrounding clinical information.

Traditional OCR isolates individual characters and attempts to match them against learned patterns. VLMs understand medical terminology, anatomical references, and standard clinical abbreviations, allowing them to infer partially obscured text based on clinical logic. When processing a prescription, a VLM recognizes that "bid" or "tid" are dosing instructions instead of random letter combinations.

Document structure awareness matters for medical forms, where handwriting appears across checkboxes, tables, and margins. VLMs parse these layouts as complete units instead of treating each text region as isolated data. The accuracy gap has direct operational consequences: a 70% accuracy rate means three out of every ten extracted values require manual correction. In compliance-heavy industries like healthcare and financial services, near-perfect extraction is critical, because errors can cascade into compliance issues, billing mistakes, or patient safety concerns.

| Capability | Traditional OCR | Vision Language Models (VLMs) |
|---|---|---|
| Accuracy on physician handwriting | 65-78% | 85-95% |
| Processing approach | Character-level pattern matching | Contextual analysis of entire document regions |
| Medical terminology understanding | Limited: treats text as generic characters | Strong: trained on clinical abbreviations and drug names |
| Spatial layout handling | Struggles with margins, rotations, and overlapping text | Parses complete layouts, including checkboxes and tables |
| Ambiguity resolution | Cannot infer unclear characters from context | Uses surrounding clinical information to disambiguate |
| Best use case | Clean printed text on standard documents | Cursive handwriting and complex medical forms |

Common Document Types That Require Handwriting Recognition in Healthcare

Prescriptions capture dosages, frequencies, and drug names where a single misread character changes clinical meaning. Misinterpreted zeros and decimal points lead to tenfold medication errors, while similar drug names require context to distinguish correctly.

Prior authorization forms combine checkboxes, diagnosis codes, and handwritten justifications. Incomplete extraction delays approvals when payers cannot validate medical necessity without accurate handwritten sections.

CMS-1500 and other claim forms include handwritten procedure codes, modifiers, and diagnosis details in fixed box layouts. Misread codes trigger denials or compliance flags during audits. Intelligent document processing helps automate these complex forms.

Clinical notes contain free-form observations, treatment plans, and patient history spanning margins and blank spaces. These unstructured narratives require parsing handwriting alongside printed vitals.

Patient intake and consent forms capture insurance information, medical history, and emergency contacts in cursive or print. Incomplete extraction creates data quality issues across EHR systems.

How Hybrid OCR Pipelines Handle Medical Scrawl

Hybrid OCR pipelines route document regions through different model types based on content characteristics detected during layout analysis. A layout detection model first identifies printed text blocks, handwritten annotations, form fields, tables, and checkboxes across each page. This initial classification step determines which processing path each region follows.

Printed text regions proceed through fast OCR engines optimized for typed characters. Handwritten sections route to VLMs that interpret cursive and irregular writing using surrounding context. Form fields containing checkboxes or character-per-box inputs use specialized detection models tuned for structured layouts.
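The type-based routing step can be sketched as a dispatch table keyed on the label a layout model assigns to each region. This is a minimal illustration under stated assumptions, not Extend's implementation. `RegionType`, `Region`, `ROUTES`, and the three engine functions are hypothetical stand-ins. The engines are stubs that only record which path a region took.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class RegionType(Enum):
    # Labels a layout detection model might assign (hypothetical taxonomy).
    PRINTED = "printed"
    HANDWRITTEN = "handwritten"
    FORM_FIELD = "form_field"  # checkboxes, character-per-box inputs


@dataclass
class Region:
    region_type: RegionType
    crop_id: str  # hypothetical reference to the cropped region image


# Stub engines: a real pipeline would call an OCR engine, a VLM,
# and a form-field detector here. These only record the chosen path.
def fast_ocr(region: Region) -> str:
    return f"ocr:{region.crop_id}"


def vlm_transcribe(region: Region) -> str:
    return f"vlm:{region.crop_id}"


def form_field_detector(region: Region) -> str:
    return f"form:{region.crop_id}"


ROUTES: dict[RegionType, Callable[[Region], str]] = {
    RegionType.PRINTED: fast_ocr,
    RegionType.HANDWRITTEN: vlm_transcribe,
    RegionType.FORM_FIELD: form_field_detector,
}


def process_page(regions: list[Region]) -> list[str]:
    """Send each detected region to the engine registered for its type."""
    return [ROUTES[region.region_type](region) for region in regions]


page = [
    Region(RegionType.PRINTED, "header"),
    Region(RegionType.HANDWRITTEN, "sig_line"),
    Region(RegionType.FORM_FIELD, "box_12"),
]
print(process_page(page))  # ['ocr:header', 'vlm:sig_line', 'form:box_12']
```

With the routes kept in a table, supporting a new region type, such as a stamp or diagram detector, takes one new entry rather than another conditional branch.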

Agentic orchestration manages this routing by analyzing confidence scores from each model. When standard OCR returns low confidence on a text region, the system reroutes that section to a VLM for reprocessing. This adaptive approach balances speed and accuracy by reserving compute-intensive models for regions that need them while processing straightforward printed text quickly.
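The confidence-based rerouting can be sketched as a simple escalation rule: try the fast engine first, and call the VLM only when the first pass scores below a threshold. The names, the stub engines, and the 0.80 threshold are all assumptions for illustration. A real system would tune the threshold per field type.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Extraction:
    text: str
    confidence: float  # engine-reported score in [0.0, 1.0]
    engine: str


Engine = Callable[[str], Extraction]


def extract_with_fallback(region: str, ocr: Engine, vlm: Engine,
                          min_confidence: float = 0.80) -> Extraction:
    """Try the fast engine first; escalate to the VLM only on low confidence."""
    first_pass = ocr(region)
    if first_pass.confidence >= min_confidence:
        return first_pass
    return vlm(region)


# Stub engines for illustration only (hypothetical behavior).
def stub_ocr(region: str) -> Extraction:
    # Pretend OCR is confident on printed regions and unsure on handwriting.
    score = 0.97 if region.startswith("printed") else 0.41
    return Extraction(f"ocr({region})", score, "ocr")


def stub_vlm(region: str) -> Extraction:
    return Extraction(f"vlm({region})", 0.90, "vlm")


for region in ["printed:patient_name", "handwritten:sig"]:
    result = extract_with_fallback(region, stub_ocr, stub_vlm)
    print(region, "->", result.engine)
# printed:patient_name -> ocr
# handwritten:sig -> vlm
```

This version always trusts the second pass. A variant could instead keep whichever pass scores higher, or send the region to human review when both passes are unsure.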

Accuracy Benchmarks for Handwriting Recognition in Medical Documents

Accuracy rates for physician handwriting recognition vary based on processing approach and document characteristics. Traditional OCR engines achieve around 65-78% accuracy on cursive medical notes, while specialized VLMs reach 85-95% on the same content. Hybrid pipelines combining multiple models push accuracy above 95% for most clinical documents.

Scan quality directly impacts recognition rates. Clean 300 DPI scans from dedicated scanners produce higher accuracy than mobile phone captures with shadows or skew. Handwriting consistency matters more than legibility. A physician with terrible but consistent writing yields better results than one whose style varies across documents. Medical terminology density also affects performance, as rare drug names and specialized abbreviations create more ambiguity for models to resolve.
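A cheap pre-check can flag captures unlikely to meet the 300 DPI bar before they reach recognition. This sketch makes several assumptions: the page fills the image frame, and the page is US Letter size. It estimates resolution from pixel dimensions only, so it does not detect shadows or skew. The function names are hypothetical.

```python
# Page size in inches (width, height). US Letter is assumed by default.
US_LETTER = (8.5, 11.0)


def effective_dpi(width_px: int, height_px: int,
                  page_inches: tuple[float, float] = US_LETTER) -> float:
    """Approximate resolution, assuming the page exactly fills the frame.

    Takes the lower of the two axis values to stay conservative.
    """
    # Match long side to long side so landscape captures are handled too.
    short_px, long_px = sorted((width_px, height_px))
    short_in, long_in = sorted(page_inches)
    return min(short_px / short_in, long_px / long_in)


def needs_rescan(width_px: int, height_px: int, min_dpi: float = 300.0) -> bool:
    """True when the estimated resolution falls below the target DPI."""
    return effective_dpi(width_px, height_px) < min_dpi


print(needs_rescan(2550, 3300))  # flatbed scan at 300 DPI -> False
print(needs_rescan(1080, 1440))  # low-resolution phone capture -> True
```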

Building Validation Layers for Clinical Document Processing

Confidence scoring flags uncertain extractions before they reach downstream systems. Multi-pass review agents analyze each extracted value and assign scores based on model agreement, character clarity, and field context. Values below defined thresholds route to human reviewers instead of proceeding directly into EHR systems.
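A minimal sketch of agreement-based scoring, assuming each field has been read several times (by different models or passes) with a confidence per reading. It covers only the model-agreement part of the score. Character clarity and field context, also mentioned above, are left out. `Reading`, `score_field`, `route_field`, and the 0.85 threshold are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Reading:
    value: str
    confidence: float  # model-reported score in [0.0, 1.0]


def score_field(readings: list[Reading]) -> tuple[str, float]:
    """Majority value, scored as agreement fraction x mean confidence of agreeing passes."""
    if not readings:
        raise ValueError("at least one reading is required")
    votes = Counter(r.value for r in readings)
    value, count = votes.most_common(1)[0]
    agreement = count / len(readings)
    mean_conf = sum(r.confidence for r in readings if r.value == value) / count
    return value, agreement * mean_conf


def route_field(readings: list[Reading], threshold: float = 0.85) -> tuple[str, str]:
    """Return (value, destination); low scores go to a human reviewer."""
    value, score = score_field(readings)
    return value, ("auto_accept" if score >= threshold else "human_review")


clean = [Reading("500 mg", 0.95), Reading("500 mg", 0.93), Reading("500 mg", 0.97)]
split = [Reading("10 mg", 0.95), Reading("10 mg", 0.90), Reading("1.0 mg", 0.60)]
print(route_field(clean))  # ('500 mg', 'auto_accept')
print(route_field(split))  # ('10 mg', 'human_review')
```

Multiplying agreement by confidence sends a disputed field to review even when the majority reading looks confident. A decimal-point disagreement like "10 mg" versus "1.0 mg" is exactly the case where that caution matters.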

Field-level validation applies clinical logic to catch errors OCR models miss. Dosage validators check that extracted quantities fall within safe ranges for specific medications. Drug name validators compare extracted text against formulary databases to catch near-miss transcriptions. Diagnosis code validators verify ICD-10 codes follow proper formatting rules.
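Each of these checks is small on its own. The sketch below makes several simplifying assumptions:

- It uses a toy formulary and placeholder dose limits. These are not clinical reference values.
- It uses the standard library's `difflib` to find near-miss drug names.
- It checks only the shape of an ICD-10-CM code, not whether the code exists.

All function and constant names are hypothetical.

```python
import difflib
import re

# Illustrative placeholder limits in mg per single dose. NOT clinical
# reference values; a real validator would query a maintained drug database.
DOSE_LIMITS_MG = {
    "metformin": (500.0, 1000.0),
    "metoprolol": (25.0, 100.0),
}

# Tiny stand-in for a formulary; hydralazine/hydroxyzine are a known look-alike pair.
FORMULARY = ["hydralazine", "hydroxyzine", "metformin", "metoprolol"]

# Normalize to mg so an "mcg" read as "mg" (a 1,000x error) lands out of range.
UNIT_TO_MG = {"g": 1000.0, "mg": 1.0, "mcg": 0.001}

# ICD-10-CM shape: letter, digit, alphanumeric, then up to four more
# alphanumerics with an optional decimal point. Format check only.
ICD10_SHAPE = re.compile(r"[A-Z][0-9][0-9A-Z](\.?[0-9A-Z]{1,4})?")


def check_drug_name(name: str) -> list[str]:
    name = name.strip().lower()
    if name in FORMULARY:
        return []
    near = difflib.get_close_matches(name, FORMULARY, n=3, cutoff=0.8)
    hint = f"; closest formulary matches: {near}" if near else ""
    return [f"'{name}' not in formulary{hint}"]


def check_dose(drug: str, amount: float, unit: str) -> list[str]:
    drug, unit = drug.strip().lower(), unit.strip().lower()
    if unit not in UNIT_TO_MG:
        return [f"unrecognized unit '{unit}'"]
    if drug not in DOSE_LIMITS_MG:
        return [f"no dose limits on file for '{drug}'"]
    low, high = DOSE_LIMITS_MG[drug]
    dose_mg = amount * UNIT_TO_MG[unit]
    if not low <= dose_mg <= high:
        return [f"{amount:g} {unit} {drug} is outside {low:g}-{high:g} mg"]
    return []


def check_icd10_format(code: str) -> list[str]:
    if ICD10_SHAPE.fullmatch(code.strip().upper()):
        return []
    return [f"'{code}' does not look like an ICD-10-CM code"]


def validate_line(drug: str, amount: float, unit: str, icd10: str) -> list[str]:
    """Run every field check and collect issues for the review queue."""
    return check_drug_name(drug) + check_dose(drug, amount, unit) + check_icd10_format(icd10)


print(validate_line("metformin", 500, "mg", "E11.9"))   # []
print(validate_line("metformin", 500, "mcg", "E11.9"))  # ['500 mcg metformin is outside 500-1000 mg']
print(check_drug_name("hydroxyzne"))
```

Converting every dose to a common unit before the range check is what lets a single rule catch both kinds of error. Misread zeros and decimal points, and an mg/mcg mix-up, all push the normalized dose out of range.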

Human-in-the-loop review interfaces surface flagged fields alongside source document regions with bounding boxes showing exactly where values were extracted. Reviewers correct errors directly in the interface, and those corrections feed back into training pipelines to improve future performance on similar documents. Correction patterns reveal systematic model weaknesses that become training data for refinement.
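The following sketch shows one way reviewer corrections might be captured and mined. It assumes each correction is stored with its source page and bounding box. The record layout and function names are hypothetical. Tallying (extracted, corrected) pairs is one simple way to surface systematic confusions, such as a recurring mcg/mg misread.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Correction:
    document_id: str
    field_name: str
    extracted: str   # what the model produced
    corrected: str   # what the reviewer entered
    page: int
    bbox: tuple[float, float, float, float]  # (x0, y0, x1, y1) of the source region


def confusion_patterns(corrections: list[Correction],
                       top_n: int = 3) -> list[tuple[tuple[str, str], int]]:
    """Most frequent (extracted, corrected) pairs, a proxy for systematic weaknesses."""
    pairs = Counter((c.extracted, c.corrected)
                    for c in corrections if c.extracted != c.corrected)
    return pairs.most_common(top_n)


def to_jsonl(corrections: list[Correction]) -> str:
    """Serialize corrections as JSON Lines, a common format for training data."""
    return "\n".join(json.dumps(asdict(c)) for c in corrections)


log = [
    Correction("rx-001", "unit", "mcg", "mg", 1, (120.0, 340.0, 160.0, 360.0)),
    Correction("rx-002", "unit", "mcg", "mg", 1, (118.0, 338.0, 158.0, 358.0)),
    Correction("rx-003", "frequency", "qid", "bid", 2, (200.0, 410.0, 240.0, 430.0)),
]
print(confusion_patterns(log, top_n=1))  # [(('mcg', 'mg'), 2)]
```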

Extend's Approach to Physician Handwriting Recognition

Extend's hybrid OCR pipeline routes handwritten sections through specialized VLMs trained on medical scrawl while processing printed text through fast engines. Agentic orchestration analyzes each document region during layout detection and selects the appropriate model path based on content characteristics.

For prior authorization forms with handwritten justifications, Extend's parser detects cursive annotations, routes them to VLMs with medical context understanding, and extracts dosages, diagnoses, and treatment rationale. The system processes patient charts using smart chunking that preserves clinical context across pages without hitting token limits.
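Page-aware chunking with overlap is one common way to stay under a context limit while keeping clinical context across page breaks. The sketch below is a generic version of that technique, not Extend's implementation. It uses a crude whitespace token count in place of a real tokenizer. The function names are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    # Crude stand-in: whitespace word count. Real systems use the model's tokenizer.
    return len(text.split())


def chunk_pages(pages: list[str], max_tokens: int,
                overlap_pages: int = 1) -> list[list[int]]:
    """Group page indices into chunks under max_tokens, repeating the last
    overlap_pages pages of each chunk at the start of the next one."""
    chunks: list[list[int]] = []
    current: list[int] = []
    current_tokens = 0
    for i, page in enumerate(pages):
        tokens = estimate_tokens(page)
        if current and current_tokens + tokens > max_tokens:
            chunks.append(current)
            # Carry trailing pages forward so context spans the boundary.
            current = current[-overlap_pages:] if overlap_pages > 0 else []
            current_tokens = sum(estimate_tokens(pages[j]) for j in current)
            # Drop carried pages if they leave no room for the new page.
            while current and current_tokens + tokens > max_tokens:
                current_tokens -= estimate_tokens(pages[current.pop(0)])
        current.append(i)
        current_tokens += tokens
    if current:
        chunks.append(current)
    return chunks


chart = ["hpi " * 3, "meds " * 3, "plan " * 3, "labs " * 3]  # 3 "tokens" each
print(chunk_pages(chart, max_tokens=6))  # [[0, 1], [1, 2], [2, 3]]
```

A page that alone exceeds the budget still gets its own chunk. A production system would need to split such a page further.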

Validation layers apply medical logic to flag dosing inconsistencies or drug name ambiguities before outputs reach EHR systems. Review interfaces show extracted values alongside source regions with precise bounding boxes, allowing clinical staff to correct uncertain interpretations. These corrections train the system to handle similar handwriting patterns automatically.

Healthcare organizations use Extend to convert decades of handwritten records into searchable data, parse EOBs with manual adjustments, and decode physician signatures on compliance forms.

Final Thoughts on Processing Physician Handwriting

Medical handwriting recognition has moved past the 60% accuracy rates that made automation impractical for healthcare operations. Hybrid pipelines that pair OCR with VLMs can process your prior authorizations, prescriptions, and clinical notes at accuracy levels that reduce manual work instead of creating more review tasks. Your team gets structured data from documents without building custom models or managing training infrastructure.

FAQ

What makes VLMs more accurate than traditional OCR for physician handwriting?

VLMs process entire document regions at once and understand medical terminology, allowing them to disambiguate illegible characters using surrounding clinical context. Traditional OCR isolates individual characters without semantic understanding, which fails on medical scrawl where identical letters appear different across words and clinical abbreviations require context to interpret correctly.

What accuracy rate should you expect when processing physician handwriting?

Hybrid pipelines combining VLMs with traditional OCR achieve 95%+ accuracy on most clinical documents, compared to 65-78% for standard OCR alone. Scan quality, handwriting consistency, and medical terminology density all impact performance, with clean 300 DPI scans from dedicated scanners producing the best results.

How do you implement a hybrid OCR pipeline for medical documents?

Start with layout detection to classify printed text, handwritten sections, and form fields, then route each region to the appropriate model type. Printed text goes through fast OCR engines while handwritten sections route to VLMs trained on medical terminology, with agentic orchestration analyzing confidence scores to reroute low-confidence extractions for reprocessing.

Can validation layers catch dosing errors before data reaches your EHR?

Yes. Field-level validation applies clinical logic to verify that extracted dosages fall within safe ranges for specific medications. It also checks drug names against formulary databases and confirms that diagnosis codes follow proper ICD-10 formatting. When confidence scores fall below defined thresholds, human-in-the-loop review interfaces surface the flagged fields alongside their source document regions for correction.

Which document types benefit most from VLM-based handwriting recognition?

Prescriptions with handwritten dosages and drug names see the biggest accuracy gains, followed by prior authorization forms with cursive justifications and clinical notes with free-form observations. CMS-1500 claim forms and patient intake forms with mixed printed and handwritten content also process more accurately through hybrid pipelines than traditional OCR alone.