You've built a document extraction pipeline that works great on sample PDFs. But when your team runs it against the messy invoices, contracts, and forms customers actually send, accuracy tanks and nobody knows which fields are breaking. Academic benchmarks compare foundation models on standardized datasets, but they won't tell you if your system handles signature detection or preserves table structure on your documents. The accuracy testing tools that matter measure extraction quality on real customer files, surface failure patterns at the field level, and feed corrections back into optimization loops so your system improves instead of staying broken.

TLDR:

- Document AI evaluation tools measure extraction accuracy across PDFs, forms, and invoices using metrics like field-level precision and recall and Character Error Rate (CER)

- Production systems need continuous benchmarking against real documents, not just static academic datasets

- Academic benchmarks like OmniDocBench and IDP Leaderboard compare models but lack production workflow integration

- Extend provides built-in evaluation with automated accuracy reports, schema versioning, and self-optimizing agents that achieve 99%+ accuracy

- Extend combines evaluation infrastructure with human review loops and continuous improvement, enabling teams to ship mission-critical document AI in days

What Are Evaluation and Benchmarking Tools for Document AI?

Evaluation and benchmarking tools for document AI measure how well systems extract data from PDFs, forms, invoices, contracts, and other business documents. These tools test accuracy across real-world files, comparing extracted outputs against verified ground truth to calculate precision, recall, and field-level error rates.
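To make the ground-truth comparison concrete, here is a minimal sketch of field-level precision and recall for a single document. The function name, the field names, and the exact-match rule are illustrative assumptions, not any particular tool's API.

```python
def field_precision_recall(predicted: dict, truth: dict) -> dict:
    """Score one document's extracted fields against verified ground truth.

    Assumption: a field is a true positive only when it was extracted
    (value is not None) and exactly equals the ground-truth value.
    """
    true_positives = sum(
        1 for name, value in predicted.items()
        if value is not None and truth.get(name) == value
    )
    extracted = sum(1 for value in predicted.values() if value is not None)
    expected = sum(1 for value in truth.values() if value is not None)
    precision = true_positives / extracted if extracted else 0.0
    recall = true_positives / expected if expected else 0.0
    return {"precision": precision, "recall": recall}


if __name__ == "__main__":
    # Hypothetical invoice: one correct field, one wrong value, one missed field.
    predicted = {"invoice_number": "INV-1", "total": "100.00", "due_date": None}
    truth = {"invoice_number": "INV-1", "total": "110.00", "due_date": "2024-01-31"}
    print(field_precision_recall(predicted, truth))
```

Exact matching is the strictest choice. Real evaluation sets often relax it per field, for example by normalizing dates or currency formats before comparing.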

Unlike general AI evaluation frameworks that focus on chatbot responses or code generation, document AI tools need to handle specific challenges: OCR quality on scanned images, table extraction across complex layouts, handwriting recognition, and multi-page data merging. A document processing system might nail text classification but fail catastrophically on invoice line items or form checkboxes.

The best evaluation tools run automated tests against customer documents, generate detailed accuracy reports, and help teams identify where models break down. They answer questions like: Does the system correctly extract payment terms from a contract? Can it handle redlined text or strikethroughs? What happens when invoice formats change?

Without proper benchmarking, teams ship document AI systems blind. They discover accuracy problems only after production failures cost money and trust. Quality evaluation tools catch these issues early, letting engineers iterate faster and deploy with confidence.

How Document AI Evaluation Tools Are Ranked

Ranking document AI evaluation tools requires looking at what matters when testing production systems: measurement depth, metric flexibility, real-world coverage, and workflow fit.

The best tools go beyond simple accuracy percentages. They break down performance at field, document, and dataset levels, showing exactly where extraction fails. Field-level precision and recall matter, but so do text-level metrics like Character Error Rate (CER) for OCR quality and Word Error Rate (WER) for text accuracy, along with specialized scores like Tree-Edit-Distance-based Similarity (TEDS) for table structure evaluation. Normalized Indel Distance (NID) measures string-level similarity between extracted and expected outputs.
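CER and WER are both edit distances divided by the length of the reference, computed over characters and words respectively. The sketch below uses a plain Levenshtein implementation. The function names are illustrative, and production code would typically normalize whitespace and casing first.

```python
def edit_distance(ref, hyp) -> int:
    """Levenshtein distance between two sequences (strings or token lists)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def cer(reference: str, hypothesis: str) -> float:
    """Character Error Rate: character edits per reference character."""
    if not reference:
        return 0.0 if not hypothesis else 1.0
    return edit_distance(reference, hypothesis) / len(reference)


def wer(reference: str, hypothesis: str) -> float:
    """Word Error Rate: word edits per reference word."""
    ref_words, hyp_words = reference.split(), hypothesis.split()
    if not ref_words:
        return 0.0 if not hyp_words else 1.0
    return edit_distance(ref_words, hyp_words) / len(ref_words)


if __name__ == "__main__":
    print(cer("invoice", "invo1ce"))          # one substituted character out of 7
    print(wer("net 30 days", "net 80 days"))  # one wrong word out of 3
```

Because both rates divide by the reference length, they can exceed 1.0 when the OCR output contains many spurious insertions.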

Edge case handling separates serious evaluation tools from basic test harnesses. Can the tool benchmark performance on multi-page tables, handwritten fields, redlined text, checkboxes, and signature detection? Does it track accuracy across document variants within the same type, like different invoice templates?
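Tracking accuracy per variant can be as simple as grouping field-level results by a template label. This is a minimal sketch, assuming each result is a hypothetical `(variant, field, is_correct)` tuple produced by an earlier comparison step.

```python
from collections import defaultdict


def accuracy_by_variant(results) -> dict:
    """Aggregate field-level correctness per document variant.

    results: iterable of (variant, field, is_correct) tuples.
    Returns a mapping of variant -> fraction of fields that were correct.
    """
    totals = defaultdict(lambda: [0, 0])  # variant -> [correct, seen]
    for variant, _field, is_correct in results:
        totals[variant][0] += int(is_correct)
        totals[variant][1] += 1
    return {variant: correct / seen for variant, (correct, seen) in totals.items()}


if __name__ == "__main__":
    # Hypothetical results for two invoice templates.
    results = [
        ("template_a", "total", True),
        ("template_a", "due_date", True),
        ("template_b", "total", False),
        ("template_b", "due_date", True),
    ]
    print(accuracy_by_variant(results))
```

A dataset-wide average can hide a template that fails consistently, which is why the per-variant breakdown is worth reporting alongside the overall number.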

Integration capabilities determine whether evaluation becomes a bottleneck or an accelerator. Tools that connect with existing CI/CD pipelines, support automated regression testing, and provide programmatic access let teams run continuous benchmarks as documents and schemas evolve.
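One way to wire evaluation into CI is a regression gate that compares per-field accuracy from a candidate run against a stored baseline and fails the build when any field drops too far. The sketch below is illustrative: the function names, the dictionary shape, and the default tolerance are assumptions.

```python
def find_regressions(baseline: dict, candidate: dict, tolerance: float = 0.01) -> dict:
    """Return fields whose accuracy dropped by more than `tolerance`.

    baseline / candidate: mapping of field name -> accuracy in [0, 1].
    A field missing from the candidate run is treated as accuracy 0.0.
    """
    regressions = {}
    for field, base_acc in baseline.items():
        new_acc = candidate.get(field, 0.0)
        if base_acc - new_acc > tolerance:
            regressions[field] = (base_acc, new_acc)
    return regressions


def gate_exit_code(baseline: dict, candidate: dict, tolerance: float = 0.01) -> int:
    """Exit code for a CI step: 0 when no field regressed, 1 otherwise."""
    return 1 if find_regressions(baseline, candidate, tolerance) else 0


if __name__ == "__main__":
    # Hypothetical accuracy numbers from two evaluation runs.
    baseline = {"total": 0.99, "due_date": 0.95}
    candidate = {"total": 0.99, "due_date": 0.90}
    print(find_regressions(baseline, candidate))
```

A CI job could call `sys.exit(gate_exit_code(...))` after loading both runs from wherever the team stores evaluation results.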

The most valuable evaluation solutions create feedback loops. They don't just report accuracy scores; they help teams identify failure patterns, track improvements over time, and feed corrections back into training data or prompt refinement processes.

Best Overall Evaluation and Benchmarking Tool for Document AI: Extend

Extend provides the most complete evaluation and benchmarking suite built directly into its document processing toolkit. Teams can test accuracy across representative document sets, evaluate configuration changes, and deploy with confidence using automated accuracy reports at field and document levels.

What Extend Offers

- Built-in evaluation sets to continuously test extraction accuracy on real customer documents with validated outputs

- Automated accuracy reports with field-level confidence scoring and document-level metrics

- Custom evaluation scoring supporting AI-as-a-judge, vector similarity, and fuzzy matching for flexible quality measurement

- Composer AI agent that automatically runs optimization loops against evaluation sets to achieve 99%+ accuracy in minutes

- Human-in-the-loop review UI with corrections feeding directly into eval sets for continuous improvement
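To illustrate what fuzzy-match scoring means in general, here is a minimal sketch built on Python's standard `difflib`. It is not Extend's API or implementation. The function names, the normalization step, and the 0.9 threshold are all assumptions made for the example.

```python
from difflib import SequenceMatcher


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so formatting noise is not penalized."""
    return " ".join(value.lower().split())


def fuzzy_score(expected: str, actual: str) -> float:
    """Similarity in [0, 1] between the normalized expected and actual values."""
    return SequenceMatcher(None, normalize(expected), normalize(actual)).ratio()


def fuzzy_match(expected: str, actual: str, threshold: float = 0.9) -> bool:
    """Treat a field as correct when its similarity meets the threshold."""
    return fuzzy_score(expected, actual) >= threshold


if __name__ == "__main__":
    print(fuzzy_score("Acme Corp.", "  acme   corp. "))
    print(fuzzy_match("Acme Corporation", "Globex LLC"))
```

Fuzzy matching suits free-text fields such as vendor names or addresses. Amounts, dates, and identifiers usually call for exact comparison after normalization.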

Why Extend Leads

Extend treats evaluation as a core workflow primitive rather than an afterthought. The evaluation suite integrates with schema versioning, allowing teams to test changes safely before production deployment. The Composer agent uses evaluation data to iteratively improve extraction logic, creating a self-optimizing system that learns from each correction. Documented case studies show customers achieving 99%+ accuracy on complex financial and real estate documents while eliminating manual review entirely.

Extend combines state-of-the-art accuracy with evaluation infrastructure purpose-built for production document AI, enabling teams to ship mission-critical use cases with measurable quality guarantees.

OmniDocBench

OmniDocBench is an academic benchmark dataset accepted at CVPR 2025 that provides detailed annotations for evaluating document parsing across diverse PDF types.

What They Offer

- Dataset of 981 annotated PDF pages (in the original release) with detailed annotations for layout, text, formulas, and tables across multiple document types

- TEDS (Tree-Edit-Distance-based Similarity) metric for evaluating table extraction accuracy based on structural and textual alignment

- Comprehensive evaluation framework covering layout detection, OCR, table extraction, and reading order preservation

- Public benchmark with reproducible evaluation methods available through GitHub repository
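For reference, TEDS as commonly defined in the table-recognition literature compares two tables represented as HTML trees:

```latex
\mathrm{TEDS}(T_a, T_b) = 1 - \frac{\mathrm{EditDist}(T_a, T_b)}{\max\left(|T_a|, |T_b|\right)}
```

Here $T_a$ and $T_b$ are the predicted and ground-truth table trees, $\mathrm{EditDist}$ is the tree edit distance between them, and $|T|$ is the number of nodes in a tree. A score of 1 means the tables match exactly in both structure and cell content.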

Good for: Academic researchers and teams building document parsing models who need standardized evaluation datasets to validate improvements against published baselines.

Limitation: Does not provide production-ready evaluation infrastructure or workflow integration. Teams must manually implement evaluation pipelines, manage ground truth datasets, and build their own human review systems. The benchmark focuses on parsing accuracy but lacks support for end-to-end document processing workflows, confidence scoring, schema versioning, or continuous improvement loops that production systems require.

Bottom line: OmniDocBench offers valuable standardized evaluation data for document parsing research, but Extend delivers a complete production evaluation suite with automated optimization and workflow integration for teams shipping real-world document AI.

IDP Leaderboard

IDP Leaderboard is a benchmark created by Nanonets that evaluates vision-language models across six core intelligent document processing tasks.

What They Offer

- Evaluation across six tasks including OCR, key information extraction, document classification, VQA, table extraction, and long document processing

- Coverage of 16 diverse datasets spanning more than 9,000 documents to reflect real-world challenges

- Aggregated scoring methodology that provides holistic performance views across tasks

- Public leaderboard comparing multiple models with plans to add confidence score calibration evaluation

Good for: Teams comparing off-the-shelf vision-language models for document understanding tasks who want to understand relative model performance across standardized datasets.

Limitation: Provides model-level benchmarking but does not offer tooling for evaluating custom extraction schemas, document-specific accuracy, or production workflows. Teams cannot test their own documents, create custom evaluation sets, version schemas, or integrate evaluation into CI/CD pipelines. The leaderboard compares general model capabilities but lacks support for schema optimization, human review workflows, or continuous improvement loops needed for production document AI systems.

Bottom line: IDP Leaderboard helps compare foundation model capabilities, but Extend provides the evaluation infrastructure teams need to optimize, monitor, and continuously improve their specific document extraction workflows in production.

Mindee OCR Benchmark Tool

Mindee provides a free OCR benchmarking utility that tests text extraction accuracy, speed, and error rates across different OCR providers using standardized datasets.

What They Offer

- Free benchmark tool testing OCR accuracy with metrics like exact match rate and word error rate

- Performance comparison across multiple OCR providers for speed and accuracy trade-offs

- Support for different document types including invoices, receipts, and contracts

- Tutorial and guidance for running structured benchmarking tests
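Exact match rate is the simplest of these metrics: the share of samples where the OCR output equals the expected text. The sketch below is a generic illustration, not the Mindee tool's implementation. The function name and the optional normalization are assumptions.

```python
def exact_match_rate(pairs, normalize: bool = True) -> float:
    """Fraction of (expected_text, ocr_text) pairs that match exactly.

    With normalize=True, whitespace is collapsed and case is ignored
    before comparing, so only substantive differences count as misses.
    """
    def clean(text: str) -> str:
        return " ".join(text.split()).lower() if normalize else text

    if not pairs:
        return 0.0
    hits = sum(1 for expected, actual in pairs if clean(expected) == clean(actual))
    return hits / len(pairs)


if __name__ == "__main__":
    # Hypothetical samples: one formatting-only difference, one real OCR error.
    pairs = [("Total: 42.00", "total:  42.00"), ("Net 30", "Net 80")]
    print(exact_match_rate(pairs))
    print(exact_match_rate(pairs, normalize=False))
```

Exact match is unforgiving on long text, where a single wrong character fails the whole sample, which is why it is usually reported next to an error rate.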

Good for: Teams in early evaluation stages comparing basic OCR accuracy across vendors for simple text extraction use cases with clean document scans.

Limitation: Focuses exclusively on raw OCR text extraction without structured data extraction, schema-based field evaluation, or document understanding capabilities. Does not support evaluating table extraction accuracy, document classification, confidence scoring, or complex layout preservation. Lacks integration with production workflows, version control, human review loops, or automated optimization. Cannot benchmark end-to-end document processing pipelines, multi-step extraction logic, or downstream data quality metrics.

Bottom line: Mindee's benchmark tool helps with initial OCR vendor selection, but Extend delivers full evaluation for structured extraction, tables, confidence scoring, and complete document processing pipelines with continuous optimization.

Docling

Docling is an open-source document processing framework from IBM Research that handles complex business documents with focus on table structure preservation and processing speed.

What They Offer

- Open-source framework for PDF processing with DocLayNet and TableFormer models for layout understanding

- High text extraction accuracy with superior table structure preservation capabilities

- Moderate processing speeds with linear scaling from single pages to 50-page documents

- Table of contents reconstruction for document navigation

Good for: Engineering teams building custom document processing pipelines who need an open-source foundation with strong table handling and are willing to build their own evaluation infrastructure.

Limitation: Provides document parsing capabilities but no built-in evaluation suite, benchmarking tools, or accuracy measurement infrastructure. Teams must manually create evaluation datasets, implement custom accuracy metrics, and build ground truth management systems. Lacks schema versioning, confidence scoring, human review interfaces, automated optimization agents, field-level accuracy reporting, or regression testing support.

Bottom line: Docling offers solid open-source parsing, but Extend provides the complete evaluation, optimization, and quality assurance infrastructure needed for production-grade document AI with measurable accuracy guarantees.

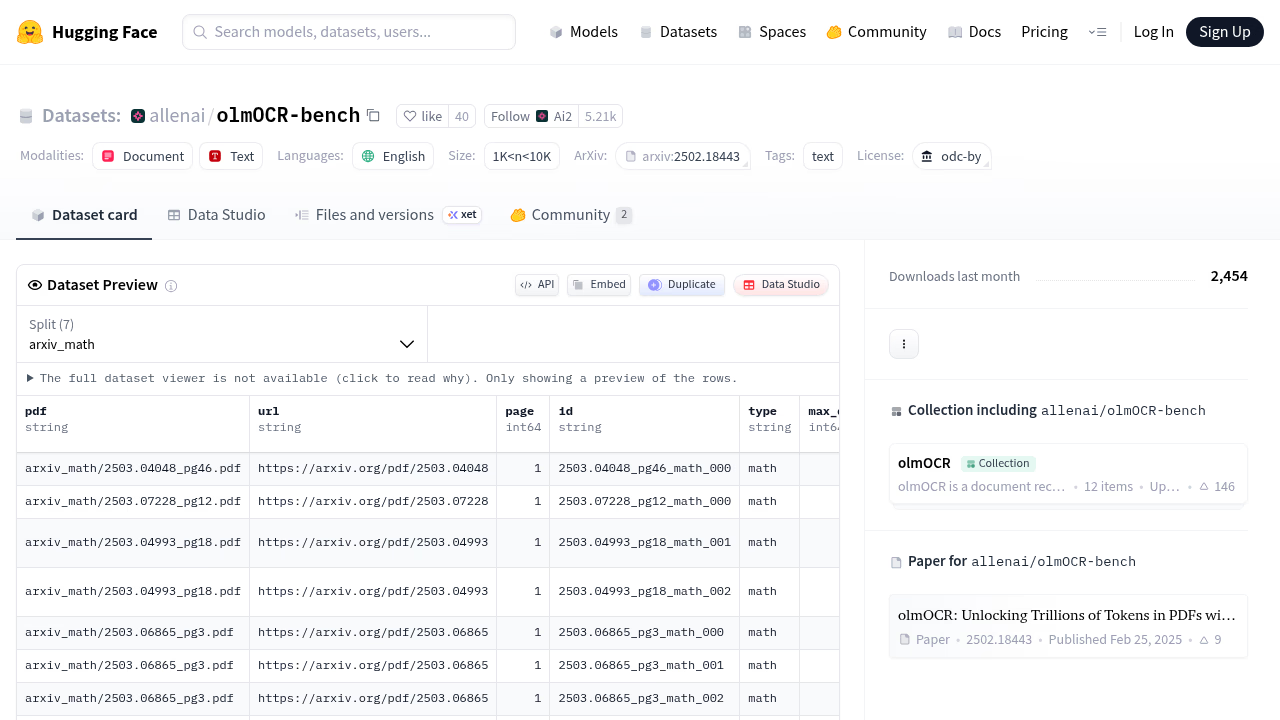

olmOCR-Bench

olmOCR-Bench is an evaluation dataset and framework from the Allen Institute for AI featuring 1,403 PDF files and 7,010 unit test cases designed to measure OCR system performance.

What They Offer

- Dataset with unit test cases capturing properties of high-quality OCR output

- Benchmark for evaluating OCR systems converting PDF documents to markdown format

- Focus on preserving textual and structural information with reproducible evaluation methodology

- Open-source framework with scoring for academic research and model development
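The unit-test style of evaluation can be pictured as small pass/fail checks on the generated markdown, scored by pass rate. The sketch below is illustrative only and is not the olmOCR-Bench code. The three check types and all function names are assumptions.

```python
def text_present(markdown: str, snippet: str) -> bool:
    """Pass when the expected snippet appears in the output."""
    return snippet in markdown


def text_absent(markdown: str, snippet: str) -> bool:
    """Pass when unwanted text (for example a page footer) is not in the output."""
    return snippet not in markdown


def appears_before(markdown: str, first: str, second: str) -> bool:
    """Pass when both snippets exist and `first` comes earlier (reading order)."""
    i, j = markdown.find(first), markdown.find(second)
    return i != -1 and j != -1 and i < j


def pass_rate(markdown: str, checks) -> float:
    """checks: list of (check_function, args_tuple). Returns fraction passed."""
    results = [check(markdown, *args) for check, args in checks]
    return sum(results) / len(results) if results else 0.0


if __name__ == "__main__":
    # Hypothetical OCR output and three checks against it.
    output = "# Invoice\n\nBill to: Acme\n\nTotal: 100.00\n"
    checks = [
        (text_present, ("Total: 100.00",)),
        (text_absent, ("Page 1 of 3",)),
        (appears_before, ("Bill to", "Total")),
    ]
    print(pass_rate(output, checks))
```

The appeal of this style is that each check is unambiguous, so a failing case points directly at the property the output violated.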

Good for: AI research teams developing novel OCR models who need standardized test cases to validate text recognition improvements against published baselines.

Limitation: Provides static evaluation datasets but no production infrastructure, workflow integration, or continuous monitoring. Does not support custom document evaluation, schema-based extraction testing, field-level accuracy measurement, confidence scoring, or human review workflows. Lacks automated optimization, version control, regression testing, or CI/CD integration. Designed for OCR model evaluation rather than complete document understanding pipelines.

Bottom line: olmOCR-Bench serves OCR researchers well, but Extend delivers the evaluation suite production teams need to continuously measure, optimize, and improve end-to-end document processing accuracy.

Feature Comparison Table of Document AI Evaluation Tools

The table below compares core evaluation features across the tools covered in this guide, highlighting which solutions provide production-ready infrastructure versus academic benchmarks or basic OCR testing utilities.

| Feature | Extend | OmniDocBench | IDP Leaderboard | Mindee Benchmark | Docling | olmOCR-Bench |
|---|---|---|---|---|---|---|
| Built-in evaluation sets | Yes | No | No | No | No | No |
| Automated accuracy reports | Yes | No | No | Yes (basic) | No | No |
| Custom evaluation scoring | Yes | No | No | No | No | No |
| Schema versioning support | Yes | No | No | No | No | No |
| Confidence scoring evaluation | Yes | No | Planned | No | No | No |
| Human review workflow | Yes | No | No | No | No | No |
| Automated optimization | Yes | No | No | No | No | No |
| Production workflow integration | Yes | No | No | No | No | No |
| Field-level accuracy metrics | Yes | No | No | No | No | No |
| Table extraction evaluation | Yes | Yes | Yes | No | Yes | No |
| Continuous improvement loops | Yes | No | No | No | No | No |
| API integration | Yes | No | No | No | Yes | No |

Why Extend Is the Best Evaluation and Benchmarking Solution for Document AI

Extend stands apart by treating evaluation as infrastructure that powers the entire document processing lifecycle. While other solutions offer static benchmarks or isolated testing utilities, Extend's evaluation suite drives active optimization through its Composer agent, feeds corrections back into schema refinement, and integrates with version control to prevent regression.

This closed-loop architecture creates a self-improving system. When the Review Agent flags low-confidence fields, human corrections automatically update evaluation sets and trigger Composer to reoptimize extraction logic. Schema changes get tested against existing evaluation data before deployment, preventing production breaks that plague teams using disconnected testing tools.
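The general shape of such a loop can be sketched in a few lines: route low-confidence fields to a reviewer, then store the corrected values as new ground truth. This is a conceptual illustration, not Extend's implementation. The function names, the record layout, and the 0.85 threshold are assumptions.

```python
def route_fields(fields: dict, threshold: float = 0.85):
    """Split extracted fields into auto-accepted and needs-review groups.

    fields: mapping of field name -> (value, confidence in [0, 1]).
    """
    auto_accepted, needs_review = {}, {}
    for name, (value, confidence) in fields.items():
        target = needs_review if confidence < threshold else auto_accepted
        target[name] = value
    return auto_accepted, needs_review


def record_correction(eval_set: list, document_id: str,
                      accepted: dict, corrections: dict) -> dict:
    """Append a ground-truth record built from accepted and corrected values."""
    record = {"document_id": document_id, "expected": {**accepted, **corrections}}
    eval_set.append(record)
    return record


if __name__ == "__main__":
    # Hypothetical extraction with one low-confidence field.
    fields = {"total": ("100.00", 0.99), "due_date": ("2024-01-13", 0.42)}
    accepted, review = route_fields(fields)
    eval_set = []
    record_correction(eval_set, "doc-1", accepted, {"due_date": "2024-01-31"})
    print(review, eval_set)
```

Every corrected document becomes a new test case, so the evaluation set grows toward the documents that actually cause trouble in production.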

The results speak clearly. Customers process millions of mission-critical documents at 99%+ accuracy without manual review queues, shipping production systems in days instead of months. Academic benchmarks and vendor comparison tools help with initial model selection, but they cannot optimize your specific documents, learn from your corrections, or integrate with your deployment pipeline.

For teams building production document AI where accuracy directly impacts revenue and compliance, Extend delivers the only evaluation solution that measures, optimizes, and guarantees quality at scale.

Final Thoughts on Testing Document Processing Accuracy

The quality of your document AI evaluation directly impacts how fast you can ship and how confident you feel about production accuracy. You want tools that measure performance on your actual documents, catch regressions automatically, and turn human corrections into continuous improvements. Generic benchmarks tell you which foundation models perform well on standardized datasets, but real-world document processing needs evaluation infrastructure that tests your extraction schemas and prevents the accuracy drops that break customer trust.

FAQ

How do you choose the right evaluation tool for your document AI system?

Choose based on your deployment stage and accuracy needs: production teams processing mission-critical documents need integrated evaluation suites with automated optimization and human review workflows, while research teams comparing baseline models can use academic benchmarks like OmniDocBench or IDP Leaderboard.

What's the difference between OCR benchmarking and full document AI evaluation?

OCR benchmarking measures raw text extraction accuracy using metrics like word error rate, while full document AI evaluation tests structured data extraction across fields, tables, confidence scoring, and multi-page processing with schema-based accuracy measurement.

Which evaluation approach works best for teams without dedicated ML engineers?

Tools with built-in evaluation sets, automated accuracy reports, and visual review interfaces require less technical overhead than academic benchmarks or open-source frameworks that demand custom metric implementation and ground truth dataset management.

Can you run continuous accuracy testing as document schemas evolve?

Production-ready evaluation platforms support schema versioning and regression testing through CI/CD integration, letting teams validate extraction changes against existing accuracy baselines before deployment, while static benchmarks require manual re-testing.

When should you switch from vendor comparison benchmarks to custom evaluation?

Move to custom evaluation once you've selected base models and need to optimize extraction for your specific document types, field schemas, and accuracy requirements that generic benchmarks cannot measure.